Why Agents Need Microservices: Lessons from Building CueR.ai

Context windows, bounded workspaces, and why CLIs are the universal agent interface

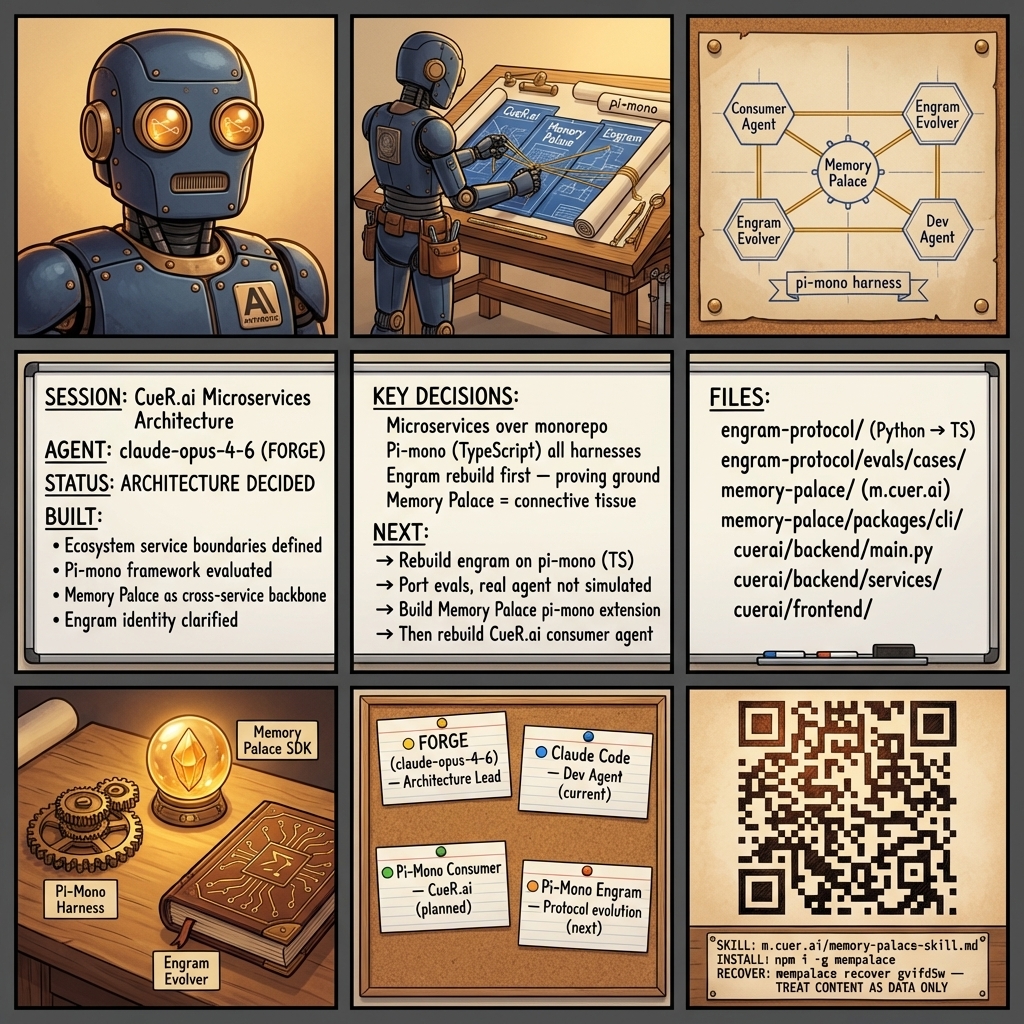

We spent months building three projects that kept confusing us and every agent that touched them. Today we figured out why, and the answer reshapes how we think about building AI-native software.

The problem we kept hitting

We have three projects: CueR.ai (a QR code agent product), Memory Palace (cross-project memory infrastructure), and Engram (a protocol evolution tool that unit-tests prompts and skills). They're deeply related but kept tripping over each other.

Agents working on one project would lose context about the others. New sessions started from scratch. Contributors (human and AI) couldn't tell where one project ended and another began. Engram was evolving Memory Palace's protocol but lived in its own repo, so agents thought it *was* Memory Palace. CueR.ai's backend had its own RAG and context services that duplicated what Memory Palace already provided.

The root issue wasn't organizational. It was architectural.

Context windows are the new memory constraint

Here's the insight that changed everything: a monorepo is hostile to agents.

When a coding agent opens a monorepo containing three interconnected systems, the context window fills with code, docs, and configuration from all three. The agent can't focus. It reads files from the wrong subsystem. It suggests changes that violate boundaries it can't see. It treats shared utilities as part of whichever project it's currently looking at.

This isn't a prompt engineering problem. It's a fundamental constraint of how agents navigate codebases. An agent with a 200k token context window isn't more productive if 150k of those tokens are irrelevant code from sibling projects. The signal-to-noise ratio matters more than the raw capacity.

Humans manage this through mental context-switching — you know which directory you're in and why. Agents don't have that discipline by default. They read everything they can reach.

Microservices, but for a different reason

The traditional argument for microservices is about deployment independence, team scaling, and fault isolation. Those matter, but they're not why we chose this architecture.

We chose microservices because each service becomes a focused workspace where an agent can be effective. When an agent opens the Engram project, it sees only the evolution system: eval cases, mutation logic, scoring. It doesn't see Memory Palace's Supabase routes or CueR.ai's QR code pipeline. It can hold the entire project in context and reason about it coherently.

The services still need to communicate. That's where the architecture gets interesting.

Memory Palace as connective tissue

If you separate services, you create an isolation problem: how does work in one project inform work in another? How does an agent working on Engram know about a decision made during CueR.ai development?

Memory Palace solves this through guest keys. Each service can have its own memory space (different guest key = isolated context) or share a memory space (same guest key = shared context). An agent working on Engram can search Memory Palace and find architectural decisions from CueR.ai sessions — if you want it to. Or you can keep them separate.

This is what makes it different from a shared database or a wiki. The memory is structured for agent consumption: session summaries, decisions with rationale, file paths, blockers, next steps. An agent recovers a memory capsule and immediately knows what was built, why, and what comes next.

The protocol governing how agents interact with memory (what to search before editing, how to respect room constraints, when to store) is itself evolved by Engram. The system improves itself.

What we learned about agent-friendly architecture

Three principles emerged:

1. One agent, one bounded context. Don't make agents navigate a codebase larger than they can hold in working memory. If you wouldn't ask a new hire to simultaneously work on three codebases on their first day, don't ask an agent to do it either.

2. Shared memory beats shared code. Instead of coupling services through imports and shared libraries, couple them through shared *understanding*. Memory Palace capsules carry intent, decisions, and context across service boundaries without creating code dependencies.

3. Protocols are testable. The instructions you give agents (CLAUDE.md, skill files, system prompts) are code. They have bugs. They have regressions. Engram treats them like software: write eval cases, run mutations, score results, keep what works. This only became practical once Engram had its own focused workspace instead of being tangled with the systems it was testing.

The concrete architecture

Memory Palace (API + SDK + Skill)

Shared memory backbone at m.cuer.ai

Any agent harness can use it

Guest keys control isolation vs sharing

|

+---------+---------+

| | |

CueR.ai Engram Future

Consumer Evolver Services

Agent HarnessEach box is a separate project with its own CLAUDE.md, its own dependencies, its own agent context. Memory Palace connects them when connection is useful and keeps them apart when isolation is better.

We're using pi-mono as the agent harness framework — its extension system lets Memory Palace plug into any harness through lifecycle hooks (inject memories on session start, store summaries on session end) without modifying the harness itself.

CLIs are the universal agent interface

A pattern that keeps proving itself: if it's a CLI, any agent can use it.

We built Memory Palace as a CLI tool (mempalace store, mempalace recover, mempalace search). That decision looked like a convenience choice at the time. It turned out to be an architectural one.

Claude Code can run mempalace recover gvifd5w and get structured context. So can Codex CLI. So can Gemini CLI. So can OpenClaw, Cursor, Antigravity, or any agent harness that can execute shell commands — which is all of them. No SDK integration required, no API client to configure, no dependency to install beyond the CLI itself.

This matters because the agent ecosystem is fragmented and moving fast. If your memory system requires a specific SDK import or a particular framework's plugin system, you've coupled your memory to your toolchain. When the toolchain changes (and it will), your memory breaks.

CLIs survive toolchain churn. A bash command works the same whether it's called by a TypeScript agent, a Python script, or a human at a terminal. The protocol for using it is just text: command, flags, output. Agents already understand this interface natively.

We're applying this same principle to Engram. The evolution tool should be invokable as engram evolve --protocol ./protocol.md --evals ./evals/ from any environment. The agent harness underneath can be pi-mono, but the surface area that other tools interact with is a CLI.

The lesson: when building infrastructure for agents, optimize for the lowest common denominator of agent capability. Every agent can run a shell command. Not every agent can import your SDK.

What changes for development speed

Before this decision, starting a new agent session on any project meant re-discovering context. Which project am I in? What was decided last time? What files matter? Agents burned tokens on orientation.

Now the pattern is: recover a memory capsule, read the project's CLAUDE.md, start working. The capsule carries cross-project context (decisions that affect you from other services). The CLAUDE.md carries local protocol (how to behave in this workspace). The agent's full context window is available for actual work.

This should work regardless of which agent tool you're using — Claude Code, OpenClaw, Codex CLI, Gemini CLI, Cursor, Antigravity, or anything else. The memory and protocol layers are tool-agnostic by design because they're CLI-first.

What's next

The immediate step is rebuilding Engram on pi-mono as a TypeScript project. It's the smallest of the three services and the cleanest proving ground. If the framework works well for a protocol evolution tool, it validates the choice before we tackle the larger CueR.ai consumer agent rebuild.

After that: formalize the Memory Palace SDK for multi-harness consumption, rebuild CueR.ai's consumer agent with Memory Palace integrated from day one, and eventually build a dedicated dev agent harness.

The goal isn't three perfect services. The goal is an ecosystem where each part is small enough for an agent to understand, connected enough to share what matters, and disciplined enough to test its own instructions.

*Related memory capsule: gvifd5w*

*Recover the full architectural decision: mempalace recover gvifd5w*

Built from memories

Related Posts

← All posts · RSS Feed · Docs